Donghoon Shin 신동훈

I am an HCI researcher and a Ph.D. candidate in Human Centered Design & Engineering at the University of Washington, advised by Gary Hsieh and Lucy Lu Wang.

My research lies at the intersection of HCI and AI, where I build systems that streamline the dissemination, access, and consumption of complex documents (e.g., scholarly papers) to support real-world practices (e.g., design, healthcare). To achieve this, I draw on techniques from document understanding, UI understanding, and conversational agents.

I received my B.S. in Electrical & Computer Engineering and B.A. in Information Science from Seoul National University, where I was honored as a Presidential Science Scholar. Previously, I was a research intern at Microsoft Research, Google Research, Adobe Research, and Naver AI, and a software engineer at Elecle.

Selected publications

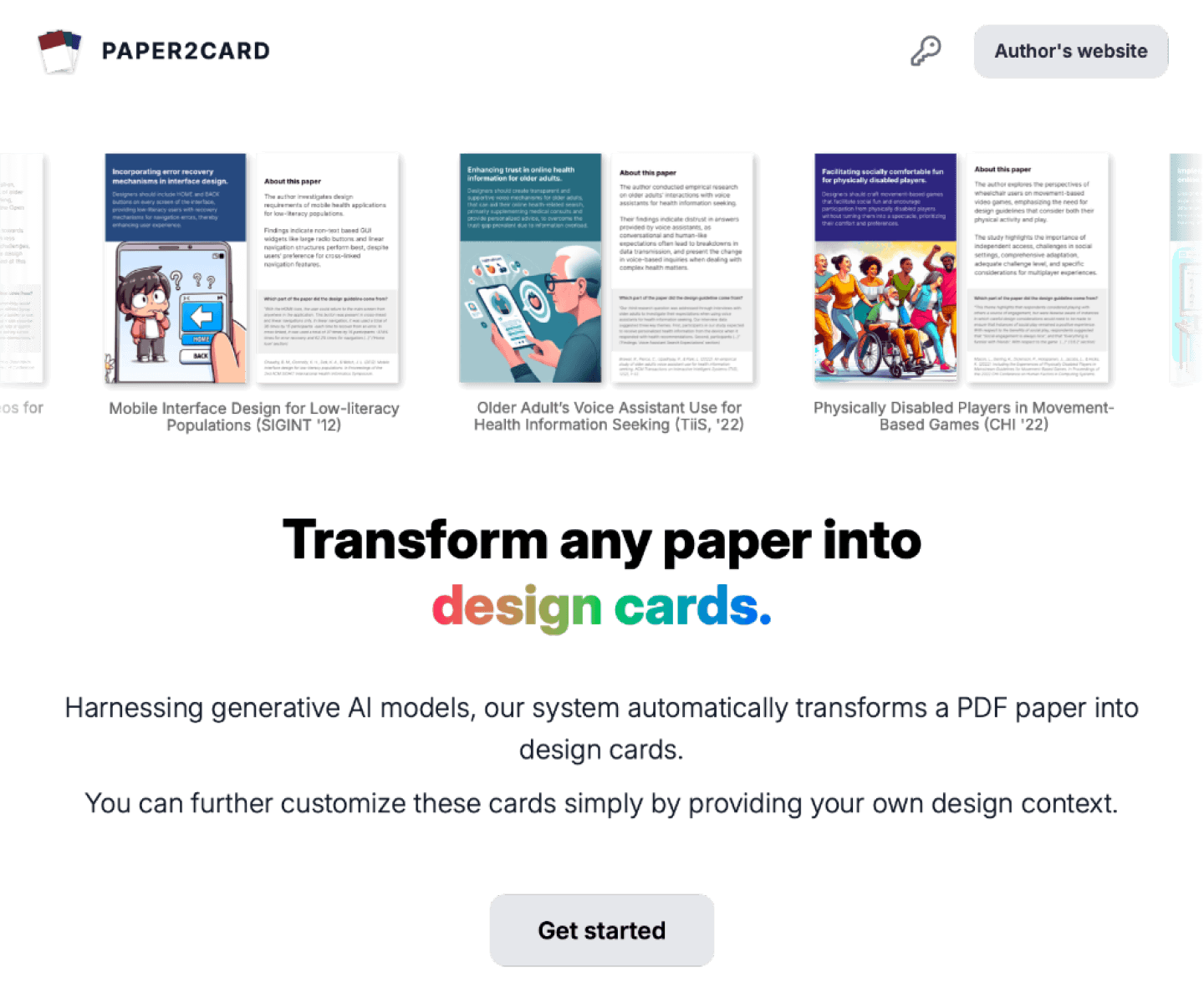

Paper2Card

Transforms HCI papers into design cards

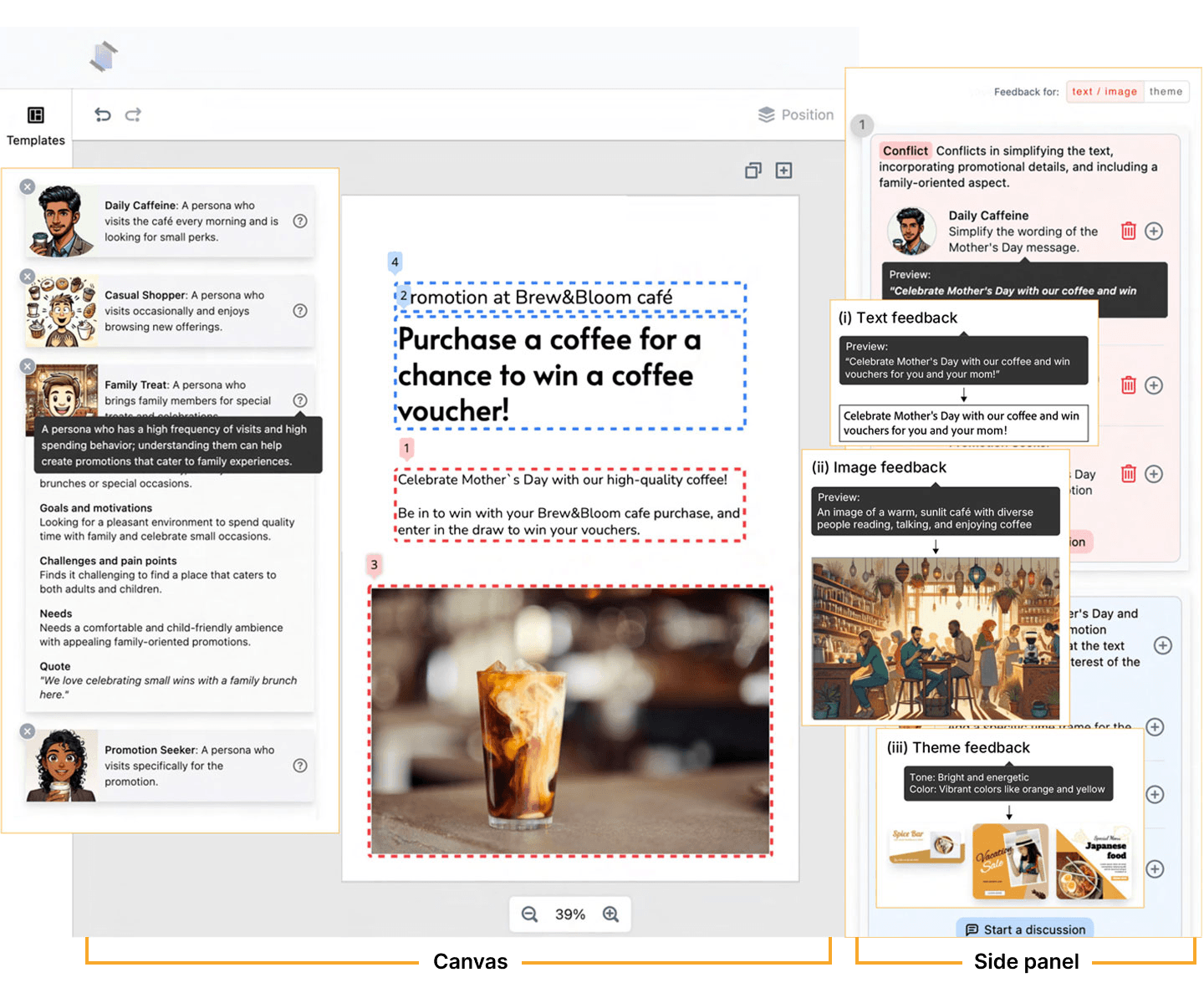

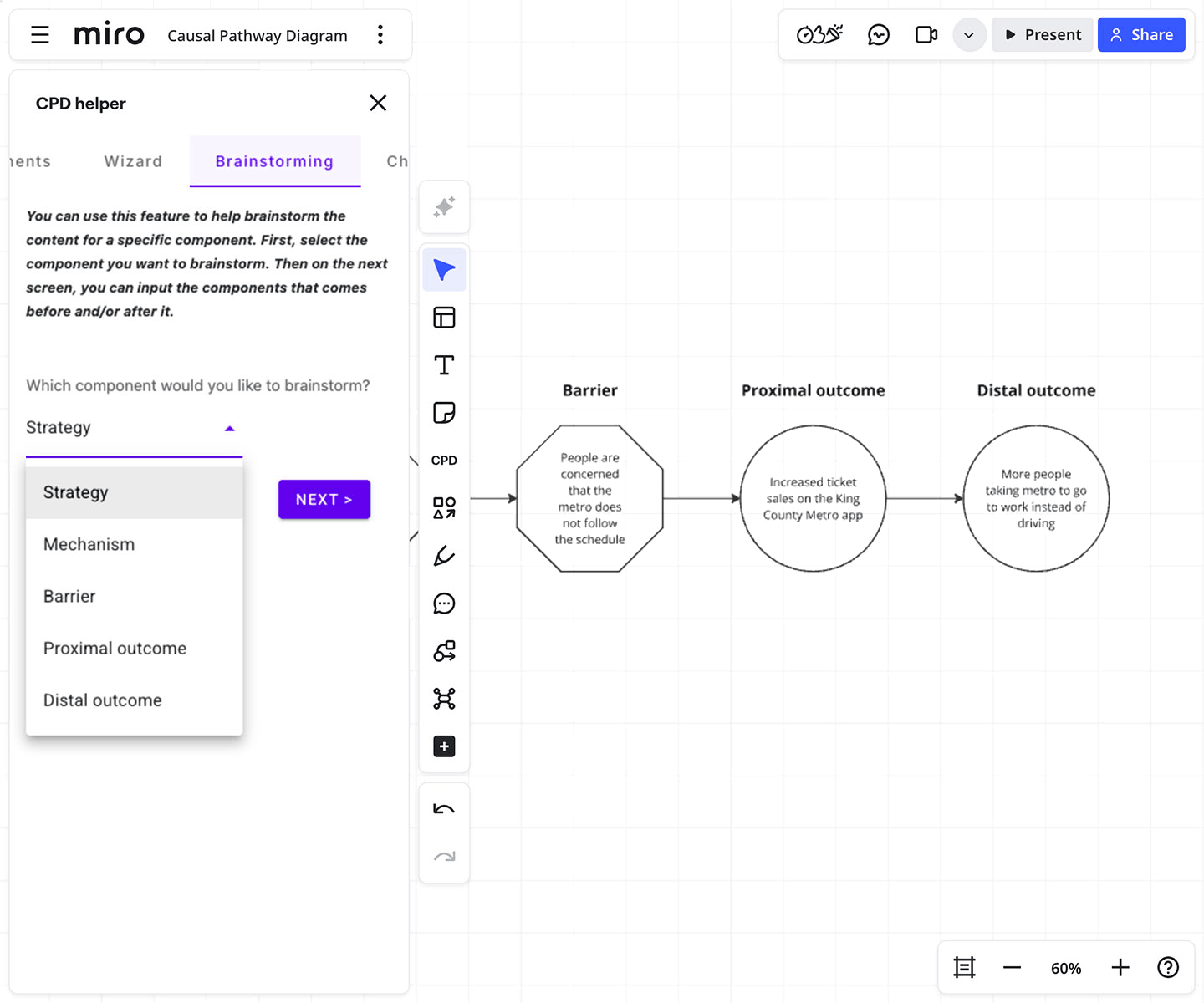

PosterMate

Multi-agent system to support simulated design collaboration

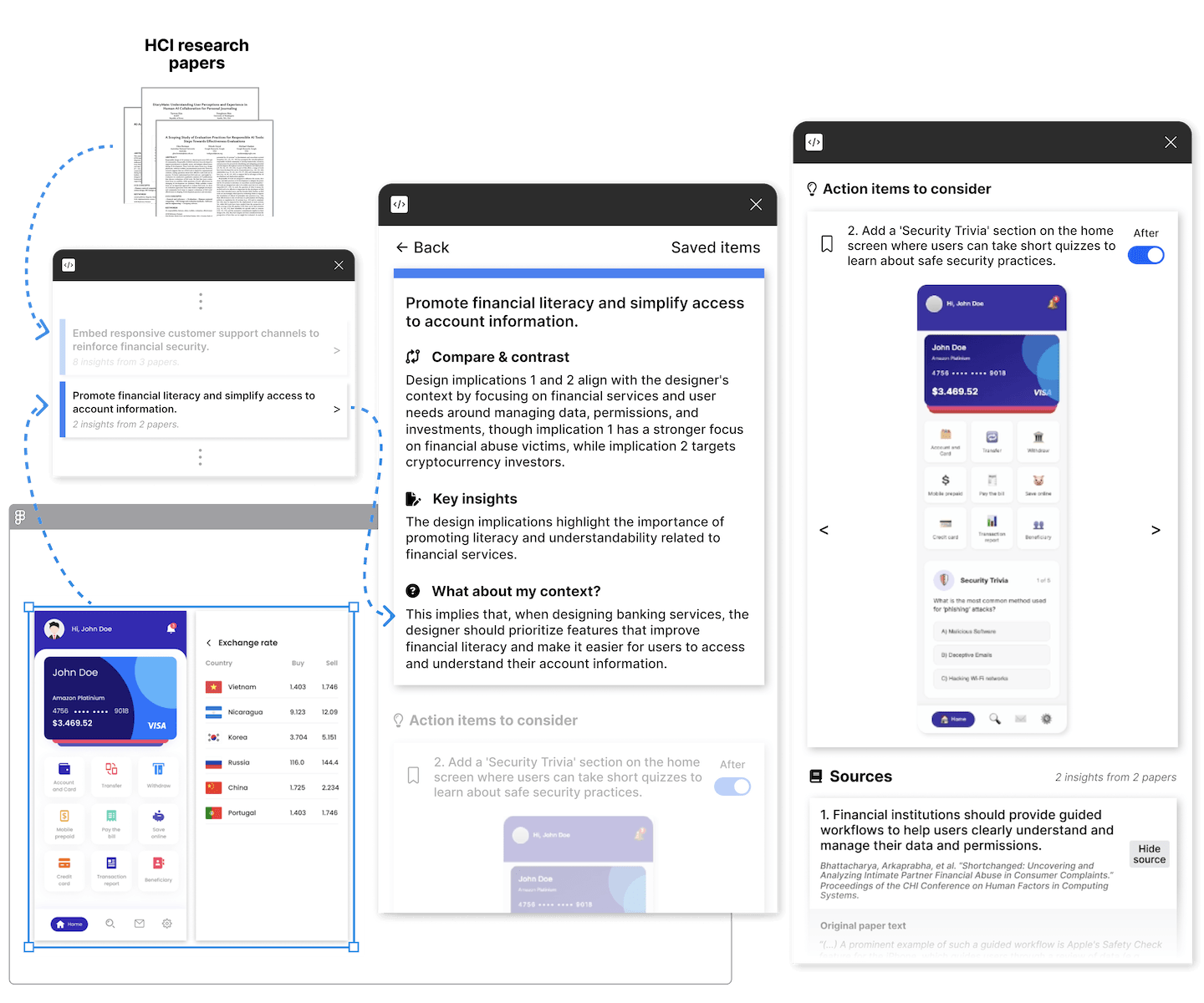

ReFinE

Turns research findings into actionable UI design support

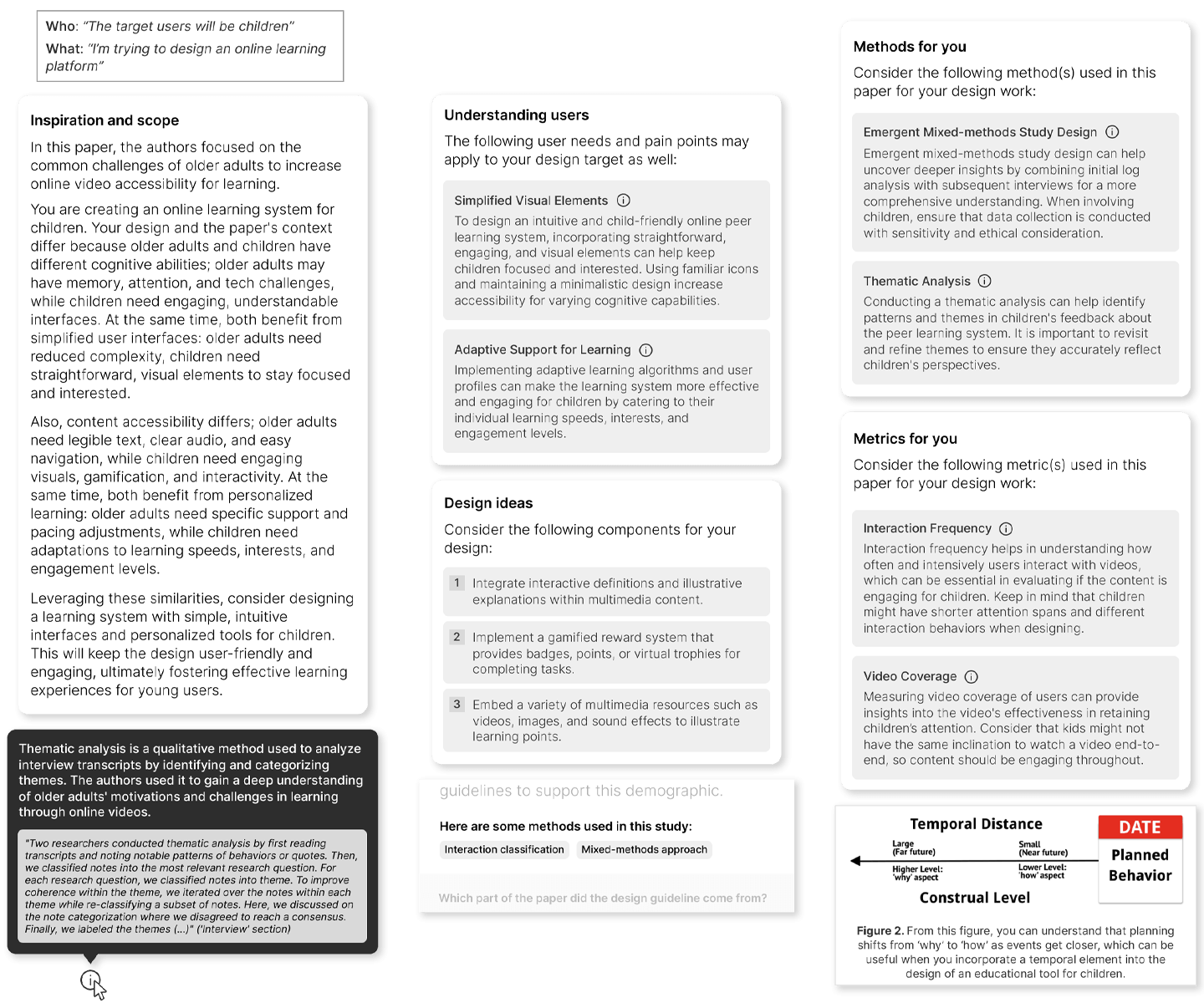

What About My Design Context?

Tailors design artifacts to designers' individual context

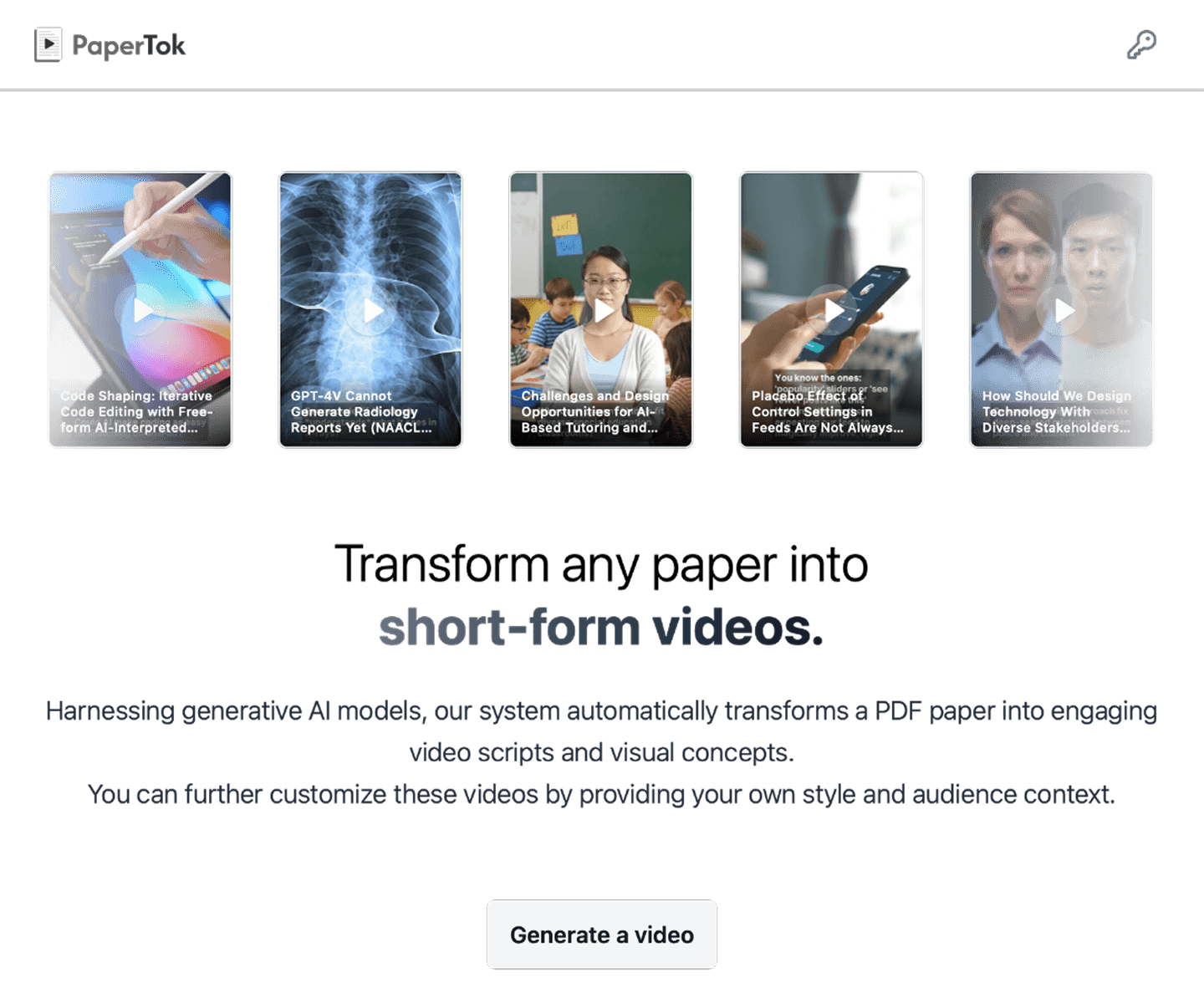

PaperTok

Transforms scholarly papers into engaging short-form videos

Theorizing design with AI

Supports casual theorizing directly within the design workflow

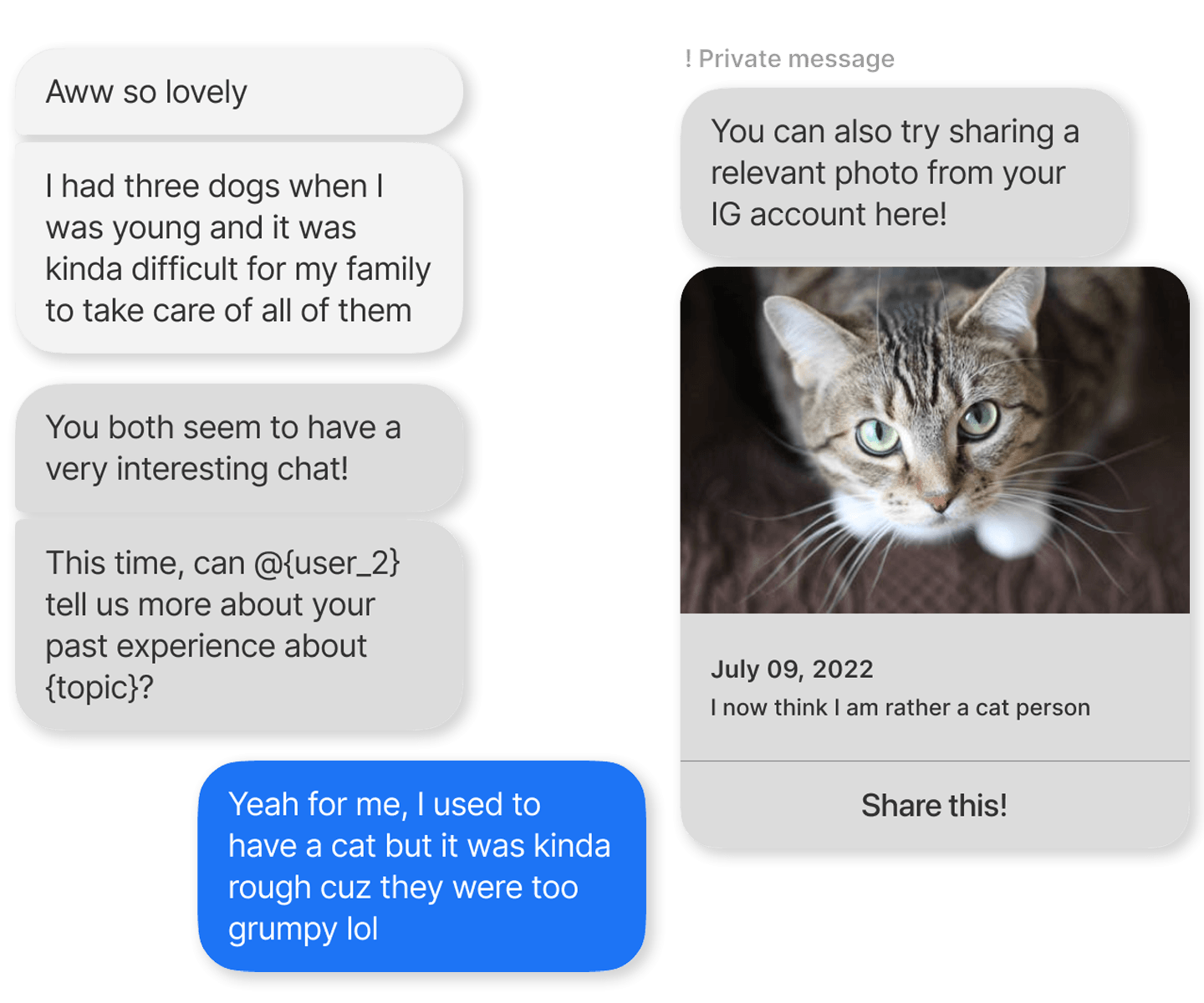

IntroBot

Chatbot to support familiarization among ad hoc teammates

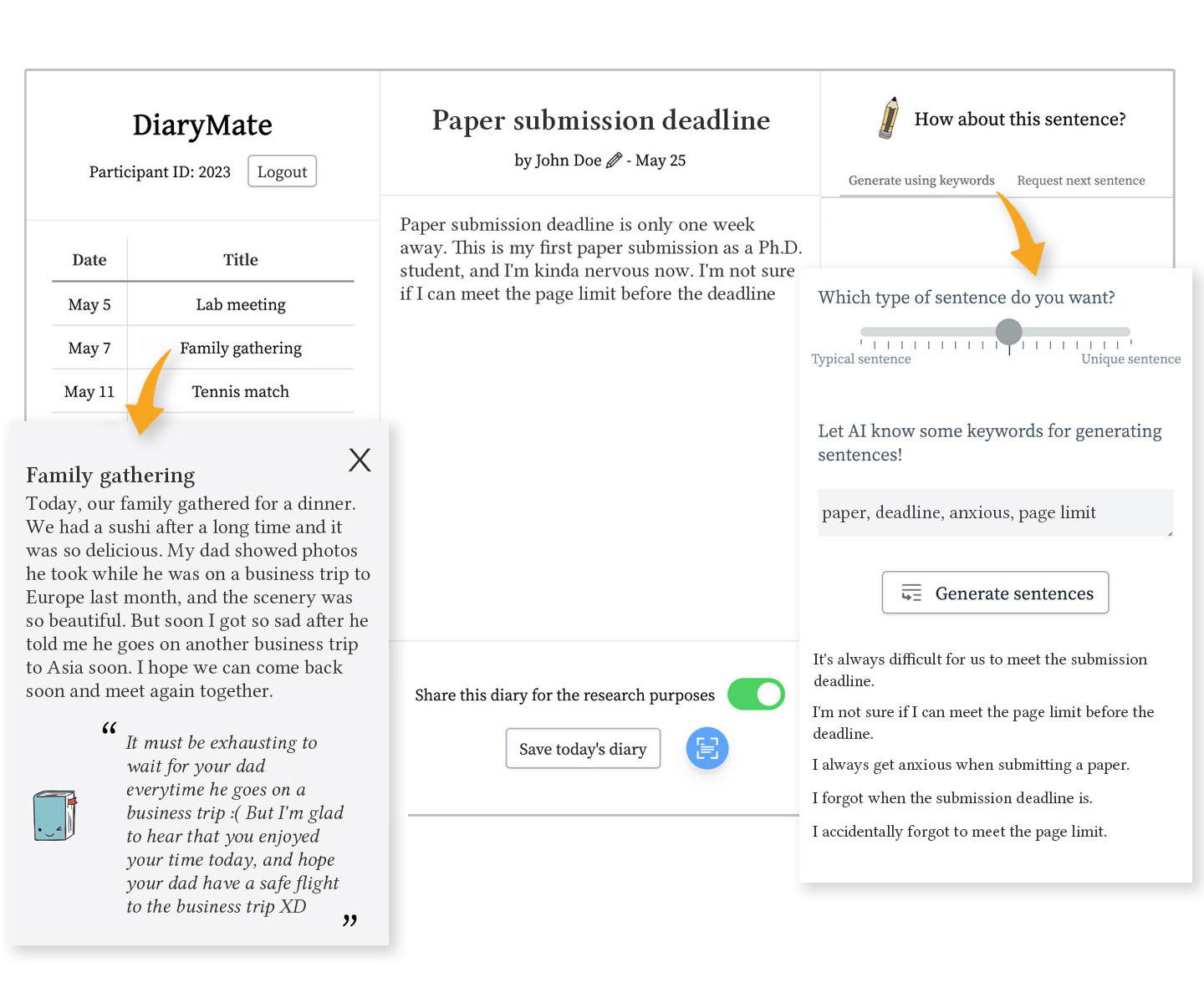

DiaryMate

LLM-infused system to support reflective writing

News

Mar 18, 2026

One paper accepted to DIS 2026

One paper on leveraging academic insights to support UI design iteration has been accepted to DIS 2026 and featured on the UW HCDE website

Jan 14, 2026

One paper accepted to CHI 2026

One paper on AI-assisted science communication has been accepted to CHI 2026

Jan 4, 2026

Papers accepted to EACL 2026 and EMNLP 2025

One paper and one workshop paper on LLM personalization have been accepted to the EACL 2026 main track and the EMNLP 2025 PALS Workshop, respectively

Travel & Talk

Jun 2026 SMU Singapore 🇸🇬

Jun 2026 DIS 2026 Singapore 🇸🇬

Mar 2026 Sejong University Seoul, Korea 🇰🇷

Nov 2025 Microsoft Research Remote 🇺🇸

Sep 2025 UIST 2025 Busan, Korea 🇰🇷

Jul 2025 CUI 2025 Waterloo, ON 🇨🇦

Jul 2025 DIS 2025 Madeira, Portugal 🇵🇹

Jun 2025 Microsoft Research New York, NY 🇺🇸

Jun 2025 KAIST Daejeon, Korea 🇰🇷

Jun 2025 Chung-Ang University Seoul, Korea 🇰🇷

University of Washington

Seoul National University

Microsoft Research

Google Research

Adobe Research

Naver AI

Korean Government Scholar for Overseas Study